Upcoming Events

#6 ECMath Colloquium on July 6, 2018, 14:00 - 17:00 (s.t.)

Topic: "Stochastics meets PDE"Location

Humboldt-Universität zu Berlin, Main Building, Room 2094

Speakers

- Antoine Gloria (Sorbonne Université, Paris)

- Peter Friz (TU Berlin and WIAS Berlin)

- Nicolas Dirr (Cardiff University)

Past Events

#5 ECMath Colloquium on January 26, 2018

Topic: "Nonsmooth problems: Theory and Applications"Speakers

- H. Attouch (Université de Montpellier): Inertial dynamical systems with vanishing damping, and fast algorithms for large scale optimization problems

- M. Ulbrich (TU Munich): Selected aspects of optimization methods for nonsmooth problems

- J. Francisco Rodrigues (Uni Lissabon): On Convex Type Constrained Hyperbolic Problems of First Order and Some Applications

Large scale structured optimization problems naturally appear in the modeling of many scientific and engineering situations. To meet the challenges posed by these issues, considerable efforts have been devoted in recent years to the study of first-order splitting algorithms. The forward-backward algorithm, (also called the proximal-gradient algorithm) which is one of the most important, is a powerful tool for solving optimization problems with a additively separable and smooth plus nonsmooth structure. It is a natural extension of the gradient-projection algorithm. In the convex framework, a simple but ingenious acceleration scheme developed by Nesterov [1] and Beck-Teboulle [2] improves the theoretical rate of convergence for the function values, in the worst case, from the standard O(k-1) down to O(k-2). The recent results by Chambolle-Dossal [3] and Attouch-Peypouquet [4], see also [5], show that the convergence rate of a slight variant of this accelerated forward-backward method, which produces convergent sequences, is actually o(k-2), rather than O(k-2). In this lecture, we will give an overview of the convergence rate of these algorithms, and show that they are robust with respect to perturbations, errors. Our arguments are based on the connection between these algorithms and a second-order differential inclusion with vanishing damping, recently introduced by Su, Boyd and Candès [6], and studied extensively in [7]. Linking algorithms with dynamic systems provides connections to many different fields of mathematics: mechanics, PDE's and control, algebraic and differential geometry to name a few, and a valuable guide for the proofs. The key point of the mathematical analysis is the introduction of energy-like Lyapunov functions, with adapted scaling. We show that the introduction of additional geometric assumptions on data, or adaptive control (Hessian-driven damping, restart) leads to even better convergence rates. Finally, we show recent progress in extending these results to more general equilibrium problems, which consist in finding zeros of maximal monotone operators. In doing so, beyond convex minimization problems, we can adress convex-concave saddle value problems, and fixed points of non-expansive operators. Applications are given in sparse optimization for signal/imaging processing, and inverse problems.

[1] Y. Nesterov, Introductory lectures on convex optimization: A basic course, volume 87 of Applied Optimization. Kluwer Academic Publishers, Boston, MA, 2004.

[2] A. Beck, M. Teboulle, A fast iterative shrinkage-thresholding algorithm for linear inverse problems, SIAM J. Imaging Sci., 2 (2009), No. 1, pp. 183--202.

[3] A. Chambolle, C. Dossal, On the convergence of the iterates of the “Fast Iterative Shrinkage/Thresholding Algorithm”, Journal of Optimization Theory and Applications, 166 (2015), pp. 968--982.

[4] H. Attouch, J. Peypouquet, The rate of convergence of Nesterov's accelerated forward-backward method is actually faster than 1/k-2, SIAM J. Optim., 26 (2016), No. 3, pp. 1824--1834.

[5] H. Attouch, Z. Chbani, J. Peypouquet, P. Redont, Fast convergence of inertial dynamics and algorithms with asymptotic vanishing damping, to appear in Math. Program. DOI: 10.1007/s10107-016-0992-8. Published online 24 march 2016.

[6] W. Su, S. Boyd, E.J. Candès, A Differential Equation for Modeling Nesterov's Accelerated Gradient Method: Theory and Insights, Journal of Machine Learning Research, 17 (2016), pp. 1--43.

[7] H. Attouch, A. Cabot, Asymptotic stabilization of inertial gradient dynamics with time-dependent viscosity, J. Differential Equations, 263, Issue 9, (2017), pp. 5412--5458.

In this talk we plan to address two topics in the field of optimization methods for nonsmooth problems that my group has worked on recently. In the first part we discuss methods for minimizing functions that are the sum of a possibly nonconvex smooth function and a convex lower semicontinuous extended real-valued function. Problems of this form arise in various fields such as compressed sensing, big data, and machine learning. The developed class of algorithms combines a fixed-point type iteration with a semismooth Newton approach to obtain a globally convergent method that can achieve superlinear local convergence. The proximal operator plays an important role in these methods. Variants of the approach that can solve generalized variational inequalities as well as extensions towards stochastic versions of the method will also be touched upon. In the second part we address the numerical solution of multi-parametric variational inequalities by low-rank tensor methods. Problems of this form arise, e.g., for variational inequalities under uncertainty. A strong challenge consists in the very high dimension of the discretized parametric state, which is represented by a huge multi-dimensional array (i.e., a high-order tensor) and the fact that each tensor entry has to satisfy an individual inequality constraint. We discuss solution methods that use low-rank tensors to make the problem tractable. The development of numerical methods is involved since only a quite limited number of tensor operations is efficiently available within the low-rank tensor format and, in addition, the tensor ranks must be controlled suitably. The talk builds on joint work with Sebastian Garreis, Andre Milzarek, and Zaiwen Wen.

We review old and new results on solutions to some classes of unilateral type problems for linear and nonlinear partial differential equations and systems of first order. We include obstacle type problems for transport equations and systems, first order problems with gradient constraints and an obstacle-mass constrained problem for a scalar conservation law. These problems are motivated from examples in polyphasic flow in porous media, in population models, in the transported sand piles problem and in superconductivity.

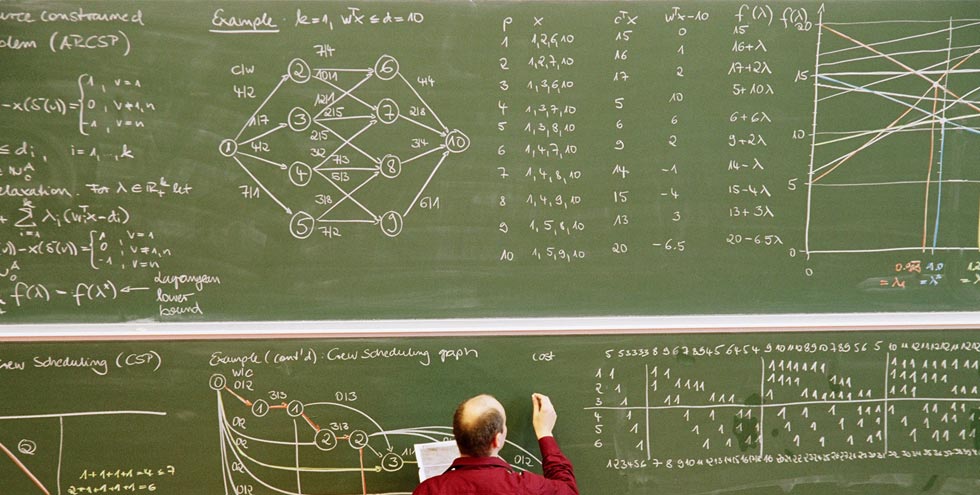

#4 ECMath Guest Colloquium on January 20, 2017

Topic: "Optimization"Speakers

- Sebastian Sager (U Magdeburg) "Mathematical Optimization for Clinical Diagnosis and Decision Support"

- Werner Römisch (HU Berlin) "Stochastic Optimization: Complexity and Numerical Methods"

- Karl Kunisch (U Graz) "Sparsity in PDE-constrained Open and Closed Loop Control"

#3 ECMath Guest Colloquium on April 22, 2016

Topic: "Sparsity: Statistics, Optimization and Applications”Speakers

- Peter Richtárik (U Edinburgh) "Empirical Risk Minimization: Complexity, Duality, Sampling, Sparsity and Big Data"

- Gitta Kutyniok (TU Berlin) "Anisotropic Structures and Sparsity-based Regularization"

- Mario Figueiredo (U Lisbon) "Learning with Strongly Correlated Variables: Ordered Weighted l1 Regularization"

#2 ECMath Guest Colloquium on January 8, 2016

Topic: Geometric PDEs and free boundary problemsSpeakers

- Konrad Polthier (FU Berlin) "Differential-based Shape Design"

- Paola Pozzi (Duisburg-Essen) "On anisotropic Willmore Flow"

- Benedikt Wirth (Münster) "Data fitting tools in Riemannian spaces"

#1 ECMath Guest Colloquium on June 5, 2015

Topic: Uncertainty QuantificationSpeakers

- Gabriel Lord (Heriot-Watt) "Stochastic Differential Equations, Computation and Travelling Waves"

- Claudia Schillings (Warwick) "Analysis of the Ensemble Kalman Filter for Inverse Problems"

- Tim Sullivan (Warwick) "Brittleness and Robustness of Bayesian Inference"

Scientific committee

- Prof. Dr. Carsten Gräser (Freie Universität Berlin)

- Matthias Liero (Weierstraß-Institut für Angewandte Analysis und Stochastik)

- Carlos Rautenberg (Humboldt-Universität zu Berlin)

- Guillaume Sagnol (Technische Universität Berlin)